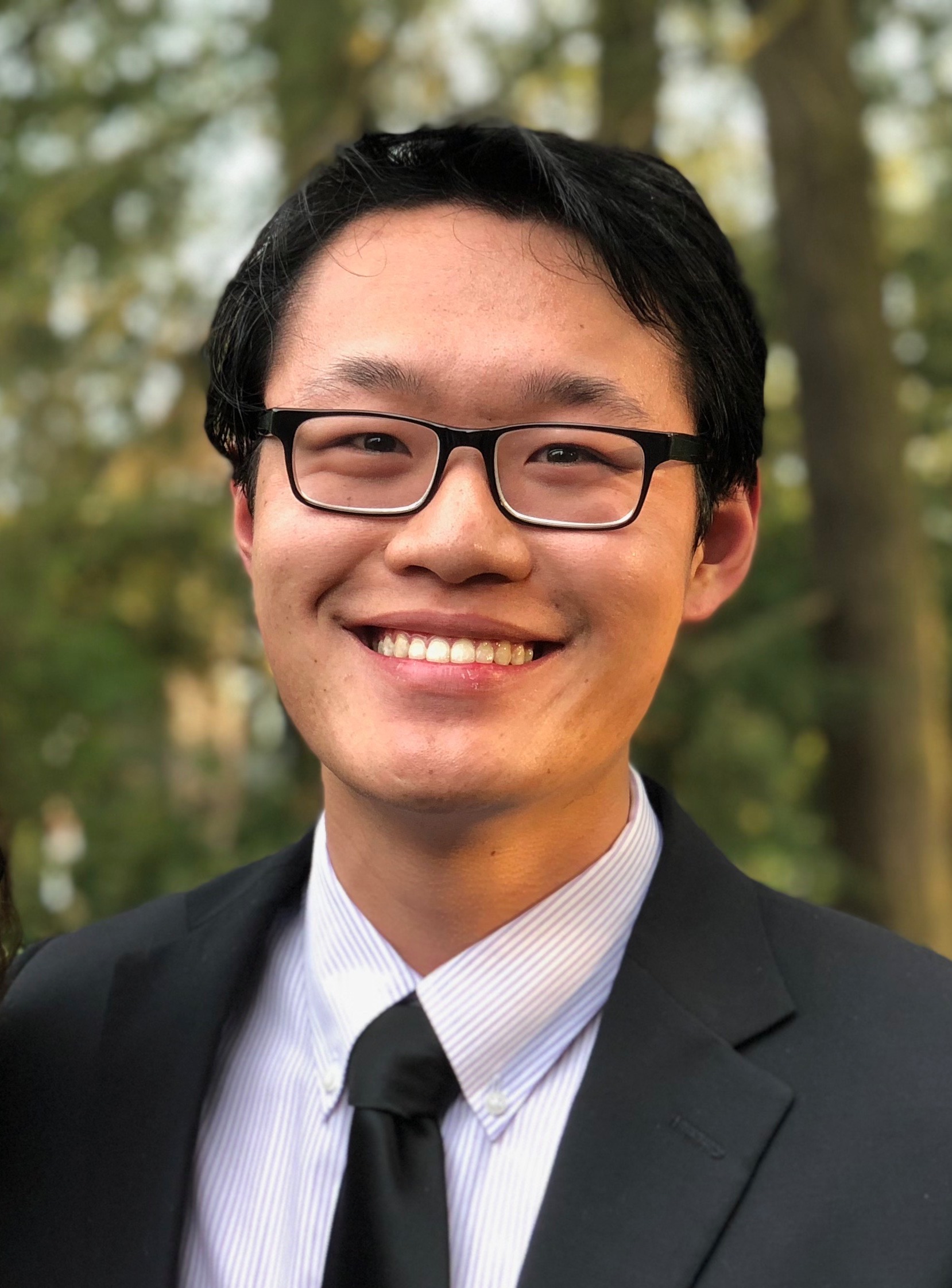

Hello! My name is Gene Li. I am a quantitative researcher at Two Sigma.

I obtained my Ph.D. from the Toyota Technological Institute at Chicago, a philanthropically endowed CS research institution. I was fortunate to be advised by Nathan Srebro.

Previously, I graduated with a BSE from the Electrical and Computer Engineering department at Princeton University. There, I had the pleasure of working with Yuxin Chen and Emmanuel Abbe.

Research Interests. During my Ph.D., I worked on theoretical machine learning and decision making. I primarily thought about function approximation for reinforcement learning in large state spaces, with the goal of characterizing the fundamental limits for these problems and understanding new algorithmic paradigms. For more details, you can read my Ph.D. thesis.

If you want to get in touch, you can reach me at: [firstname] at ttic dot edu.

You can find my (perpetually outdated) CV here.

Publications

α: denotes alphabetical author order, as is customary in theoretical computer science.

- Learning to Answer from Correct Demonstrations

Nirmit Joshi, Gene Li, Siddharth Bhandari, Shiva Prasad Kasiviswanathan, Cong Ma, Nathan Srebro.

ICLR 2026.

- The Role of Environment Access in Agnostic Reinforcement Learning

α: Akshay Krishnamurthy, Gene Li, and Ayush Sekhari.

COLT 2025.

- Optimistic Rates for Learning with Label Proportions

Gene Li, Lin Chen, Adel Javanmard, and Vahab Mirrokni.

COLT 2024.

- Dueling Optimization with a Monotone Adversary

α: Avrim Blum, Meghal Gupta, Gene Li, Naren Sarayu Manoj, Aadirupa Saha, and Yuanyuan Yang.

ALT 2024. (Outstanding Paper)

Short version appeared at OPT ML Workshop, NeurIPS 2023. (Oral Presentation)

- When is Agnostic Reinforcement Learning Statistically Tractable?

α: Zeyu Jia, Gene Li, Alexander Rakhlin, Ayush Sekhari, Nathan Srebro.

NeurIPS 2023. [Talk at RL Theory Seminar]

- Pessimism for Offline Linear Contextual Bandits using \(\ell_p\) Confidence Sets

Gene Li, Cong Ma, Nathan Srebro.

NeurIPS 2022. [Poster]

- Understanding the Eluder Dimension

Gene Li, Pritish Kamath, Dylan J. Foster, Nathan Srebro.

NeurIPS 2022.

- Exponential Family Model-Based Reinforcement Learning via Score Matching

Gene Li, Junbo Li, Anmol Kabra, Nathan Srebro, Zhaoran Wang, Zhuoran Yang.

NeurIPS 2022. (Oral Presentation) [Poster]

Teaching

- Convex Optimization - March 2024

Crash course co-taught with Naren Manoj at TTIJ.

- Statistical and Computational Learning Theory - Winter 2023

Instructor: Prof. Nathan Srebro, TTIC.

Misc

Some notes on learning theory. These notes were mostly for my own personal reference as I was learning the subject, but others may find them useful. Any mistakes are my own.

My sister Greta Li is an aspiring physicist.

I am a long-time (often suffering) fan of the Tennessee Titans.